Black Hat 2025: Security Catalyst Recap

In Las Vegas, GPUs are not the only thing overheating. So are its hackers.

Las Vegas in August is always intense, but this year the real heat came from breakthroughs: not just in cyber tools, but in how we think about offense, defense, simulation, identity, and human sustainability under pressure, all under the large cloud cover of AI (obviously). The Decibel team was out in full force, hosting a series of events: the Vibes & Cocktails happy hour with the awesome Mike Privette from Return on Security, a Women in Cyber brunch, and our usual Founder Oasis suite, where founders can hang out and take a breather from the craziness of the Black Hat Expo. Over these glorious few days inside the Mandalay Bay hotel, these were some of the biggest topics and themes discussed:

The First AI-in-Cyber Use Case Has Matured: Toil Reduction

It's taken a lot of hype cycles, but we've finally hit a clear, repeatable AI use case in cybersecurity: getting rid of soul-crushing toil. Sublime Security's ADE (Autonomous Detection Engineer) is a prime example: automating the grunt work of detection engineering so humans can focus on higher-order problems. Dropzone AI is doing the same for the SOC, taking Tier-1 alert handling and triage from hours to minutes without sacrificing quality. The value is obvious because the before/after delta is so stark. No philosophical debates about "will it work". It's already working.

The timeline tells an important story about enterprise AI adoption. Edward Wu, founder of Dropzone AI, first introduced the concept of investigating alerts using LLMs at our Founder Oasis at RSA in March of 2023, only a few months after ChatGPT was released. Dropzone was born in August 2023, and while Edward proved early on that its AI was effective for alert investigations, the human acceptance, trust, and willingness to delegate alerts took until recently to fully materialize. AI SOC dominated every conversation on the expo floor at Black Hat 2025. For me, this marks the moment we can officially declare victory on AI-powered toil reduction in security. What was once a pilot in many enterprises has become a mainstream operational reality.

This raises a critical question for the broader AI-in-security landscape: now that we've moved past the foundational debate of whether AI works in security contexts, how much faster will we adopt other AI use cases? The answer likely depends on whether those use cases can demonstrate the same stark before/after delta that made toil reduction so compelling. With trust barriers lowered and operational patterns established, I think the adoption curve for subsequent AI security applications should accelerate significantly.

Proudly Offensive — Deterrence in the AI Era

After this year’s RSA, I wrote an article about "Proudly Offensive", the idea that in an AI world, security insights increasingly come from offense, not just from watching and waiting. Historically, security posture leaned heavily on detection, with just enough response to feel proactive. Organizations built their security programs around the assumption that visibility and monitoring would give them the edge they needed.

But AI fundamentally changes this calculus. We're already seeing operational proof: startups using AI agents to dominate bug bounty programs, and more concerning, full end-to-end data exfiltrations that run autonomously from initial access to final payload delivery. The offensive capabilities of AI have moved from theoretical to operational.

Bug bounties are a useful proving ground. They demonstrate that AI can find vulnerabilities faster than humans and execute complex attack chains with minimal human oversight. But the real question is whether we can build offensive cyber capabilities that matter when geopolitical tensions escalate. What happens when the call comes in: "We need you to help"?

This is where deterrence becomes front and center. The organizations and nations that figure out how to weaponize AI for cyber operations will have strategic leverage. We're seeing early signals in American defense investments: companies like Palantir and Anduril building AI-powered capabilities at scale, not for corporate penetration testing, but for real-world adversarial engagement.

This shift is technical, political, and existential. In an AI-driven threat landscape, deterrence requires demonstrable offensive capability. The willingness to develop, deploy, and when necessary, unleash AI-powered cyber operations will define which nations maintain strategic advantage in the coming decade. American dynamism built the internet, scaled cloud computing, and created the AI models powering this revolution.

The next chapter won't just reward those who can defend better; it will reward those who can project power through AI when called upon to do so.

Simulation Is All You Need (…Almost)

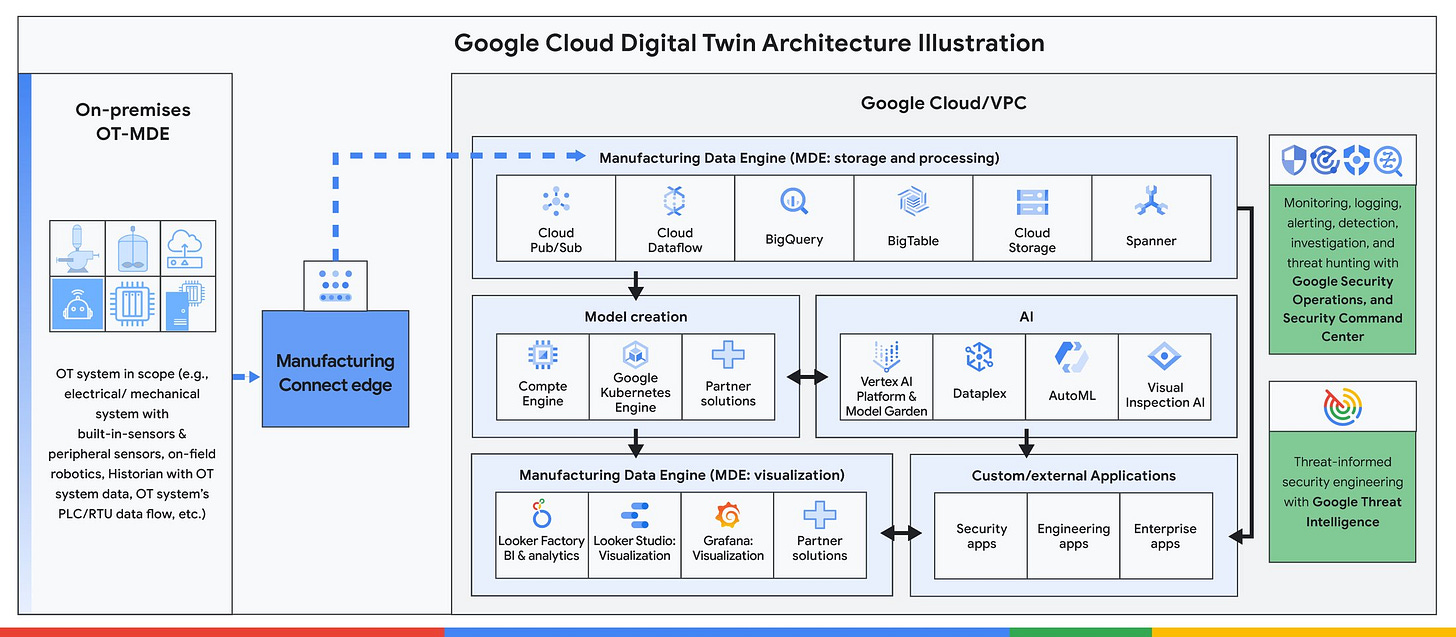

One of the more interesting threads was the growing role of digital twins in cyber. Enterprises are interested in building high-fidelity replicas of systems and networks to safely run security experiments, train AI models, and test defenses. A Dark Reading piece on the topic is worth a read. Today, these simulations excel at controlled, discrete scenarios, which makes them perfect for training or red-team/blue-team exercises. But the leap to truly continuous, real-time "mirror worlds" of live enterprise environments is still constrained by data freshness, fidelity, and cost.

The most compelling advancement is how these twins are evolving beyond simple network topology modeling to capture dynamic interactions between applications, cascading effects of component failures, and environmental factors that influence security posture. Google shared the actual complexity required in their approach: real-time data streams, comprehensive monitoring capabilities, and computational power to model both normal operations and adversarial scenarios simultaneously.

The next evolution will likely involve AI agents operating within these simulated environments. While today's digital twins rely on human red teams manually probing for weaknesses, the future points toward autonomous agents that could continuously explore attack paths, test defensive responses, and discover novel vulnerability combinations at machine speed. We're not there yet, but the foundation is being built.
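To make the idea of machine-speed attack-path exploration a bit more concrete, here is a minimal sketch of what the simplest version could look like. It is not how any vendor or Google does it: the graph, the asset names, and the helper functions `find_attack_paths` and `paths_after_control` are all invented for illustration, and a real twin would be far richer than a dictionary of reachable hosts.

```python
from collections import deque

# Hypothetical digital-twin graph. Each key is a simulated asset and each
# value lists the assets an attacker could reach from it. All names invented.
TWIN_GRAPH = {
    "phishing_email": ["workstation"],
    "workstation": ["file_share", "jump_host"],
    "jump_host": ["domain_controller"],
    "file_share": [],
    "domain_controller": ["crown_jewels_db"],
    "crown_jewels_db": [],
}


def find_attack_paths(graph, start, target):
    """Enumerate every loop-free path from start to target, breadth-first."""
    paths = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in path:  # skip nodes already on this path (no loops)
                queue.append(path + [nxt])
    return paths


def paths_after_control(graph, blocked_edge, start, target):
    """Re-run the search with one edge removed, simulating a new control."""
    src, dst = blocked_edge
    patched = {
        node: [n for n in nbrs if not (node == src and n == dst)]
        for node, nbrs in graph.items()
    }
    return find_attack_paths(patched, start, target)


if __name__ == "__main__":
    before = find_attack_paths(TWIN_GRAPH, "phishing_email", "crown_jewels_db")
    after = paths_after_control(
        TWIN_GRAPH, ("workstation", "jump_host"), "phishing_email", "crown_jewels_db"
    )
    print(f"paths before control: {len(before)}, after: {len(after)}")
```

The point of the second function is the rehearsal loop: change one control in the twin, re-run the search, and see which paths disappear before touching production.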

This sort of rehearsal logic, combined with operationalized threat intel (like GLACIAL PANDA's long-duration persistence), can let defenders explore edge cases and policy effectiveness before reality does. It's a cyber range that no longer merely approximates; it lets you fight today's adversary on your own specific blueprint.

Other Black Hat Thoughts

Social Engineering - Phishing, Vishing, really all the -ishings

The narrative is changing from running phishing campaigns to quantifying and modeling human risk in real time. The old security awareness training model of quarterly compliance checkboxes is dead. In its place, we're seeing human risk management emerge as a control layer that feeds behavioral telemetry into identity systems, conditional access policies, and even insurance underwriting (I wrote about this recently!). According to CrowdStrike, “Vishing attacks increased 442% from the first to the second half of 2024 and the number of vishing attacks in the first half of 2025 have already exceeded the total number seen in 2024.”

This means authentication must evolve beyond static credentials toward dynamic behavioral baselines and step-up validation that adapts to the sophistication of AI-driven social engineering. The threat is substantial and requires a different approach (see Push’s Phishing Detection Evasion Techniques Matrix), now that adversaries can clone voices or generate convincing real-time deepfakes that adapt mid-conversation to bypass detection systems and human intuition alike.
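As a rough illustration of what "behavioral baseline plus step-up validation" can mean in practice, here is a toy sketch. Everything in it is an assumption made for the example: the signal names, the weights, the thresholds, and the `LoginSignals`, `risk_score`, and `decide` names are hypothetical and are not taken from any product mentioned above.

```python
from dataclasses import dataclass


@dataclass
class LoginSignals:
    """Hypothetical behavioral telemetry for one authentication attempt."""
    new_device: bool
    unusual_location: bool
    recent_helpdesk_reset: bool  # e.g. a credential reset requested by phone
    cadence_deviation: float     # 0.0 = matches the user's baseline, 1.0 = no match


def risk_score(s: LoginSignals) -> float:
    """Combine signals into a 0..1 score. Weights are illustrative only."""
    score = 0.0
    if s.new_device:
        score += 0.25
    if s.unusual_location:
        score += 0.25
    if s.recent_helpdesk_reset:
        score += 0.30
    # Clamp the deviation so a bad sensor reading cannot push the score past 1.0.
    score += 0.20 * min(max(s.cadence_deviation, 0.0), 1.0)
    return round(score, 2)


def decide(s: LoginSignals) -> str:
    """Map the score to an action. Thresholds are assumptions, not guidance."""
    score = risk_score(s)
    if score >= 0.6:
        return "deny_and_review"
    if score >= 0.3:
        return "step_up"  # ask for a stronger factor before continuing
    return "allow"


if __name__ == "__main__":
    routine = LoginSignals(False, False, False, 0.0)
    odd = LoginSignals(True, False, False, 0.5)
    print(decide(routine), decide(odd))
```

The design choice worth noticing is that a help-desk reset carries the heaviest weight here, since that is exactly the path a vishing call tries to open. In a real deployment the weights would be learned or tuned per user rather than hard-coded.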

Security for AI

Acquisitions continued this year. Protect AI’s acquisition was announced during RSA, and SentinelOne announced its intent to acquire Prompt Security at the start of Black Hat. This reminds me of Evident.io’s acquisition in the early cloud days. The vendors getting acquired today are the ones creating the security controls that make AI deployment viable at enterprise scale. In the past year, solutions have moved from simple model governance to AI security platforms that can detect prompt injection, monitor model behavior drift, and protect training data pipelines. But the question is whether this is the endgame for Security for AI, since we don’t even know the full attack surface yet.

From Application Security to Product Security

There's a subtle but important shift happening in how organizations think about security ownership. Traditional AppSec focused on finding vulnerabilities in code. Product Security embeds security thinking into the entire product lifecycle: from design decisions to feature rollouts to customer-facing security controls. This isn't just semantic evolution; it's organizational. Product Security teams report to product leadership, not just security leadership, and they're measured on customer outcomes, not just vulnerability counts. AI companies are attacking this problem at different stages: Prime Security (this year's Black Hat Startup Champion) focuses on design-stage risk analysis before code is written, making the call that security needs to be woven into the product development process itself.

Final Thought

Black Hat 2025 made it clear: AI in security is no longer just a thought experiment. It’s operational, shaping how we reduce toil, test defenses, and even think about deterrence. From the expo floor to our own events, the energy, ideas, and debates reminded me why this community is so special. Huge thanks to all the security practitioners who joined us, and to our friends across the Decibel family and beyond; it was truly great to catch up! Looking forward to seeing you all again soon…ideally somewhere with a milder climate.

PS: A big shoutout to my good friend Sean Sun (founder of Miscreants) for hosting an incredible (and much-needed, off-Strip) dinner with so many friends, teachers, and creators in the security community whom I have long admired as a student of the security industry.
